“Words carry weight” is more than a catchy phrase for Sujal Deoda; it is the guiding thread of his research at the International Institute of Information Technology, Hyderabad (IIIT‑H). The fourth‑year student’s paper on the etymology of AI‑related keywords – co‑authored with Human‑Centered Systems Research Centre (HSRC) advisor Dr. Aakansha Natani – garnered sharp interest from both AI experts and social scientists. This recognition earned him an all‑expenses‑paid ticket to the second annual conference of the International Association for Safe & Ethical AI (IASEAI), hosted at UNESCO House in Paris from February 24–26, 2026.

For Sujal, an affable and outgoing engineering student by day and a philosophy‑minded researcher by night, the journey to Paris was as much a story of chance and perseverance as it was of academic merit.

A dramatic beginning to a dream trip

The path to the IASEAI podium was neither linear nor assured. In late 2025, Sujal was preparing to submit his conference paper when the looming deadline tested his time‑management skills. “On the day of the deadline, everything was ready,” Sujal recalls, “and I just had to hit the ‘Submit’ button.”

Caught up in the high‑energy chaos of Megathon, IIIT‑H’s flagship hackathon, Sujal lost track of the clock and missed the 6 PM cutoff. “We won the hackathon, but I realized I had overshot the conference deadline,” he admits.

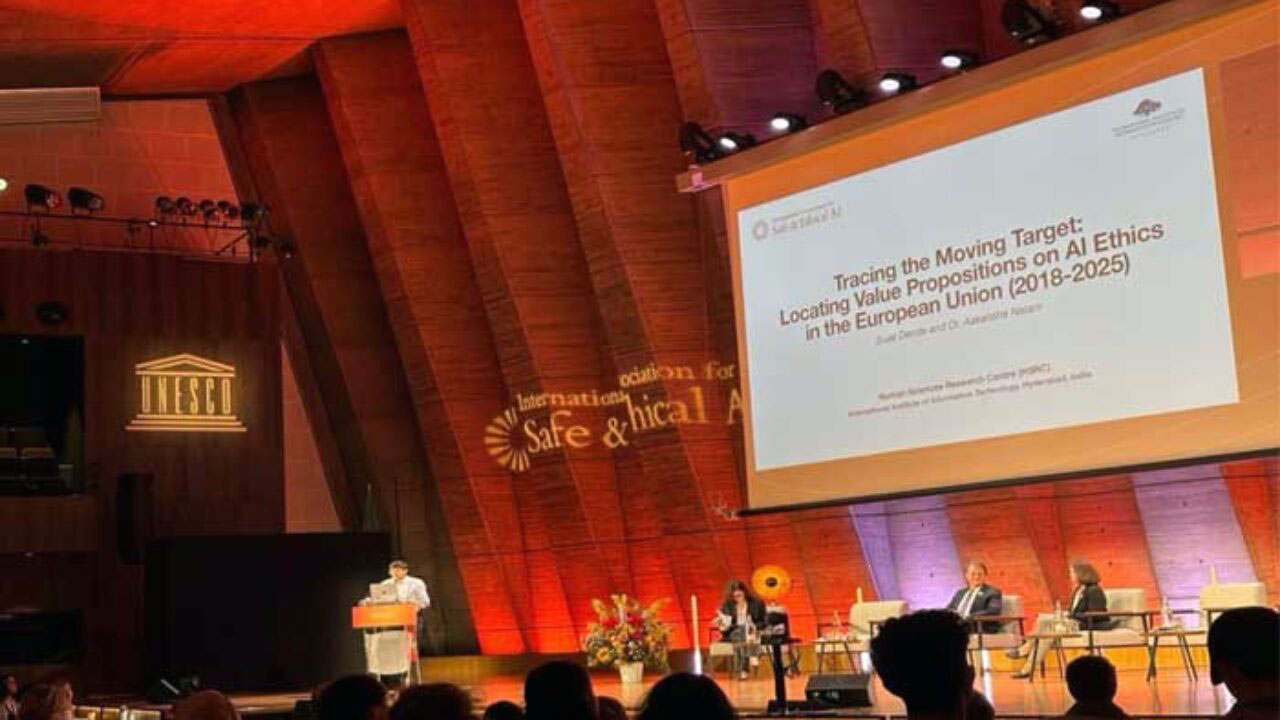

What followed was a swift email to the organizers, a brief moment of heart‑in‑mouth anxiety, and a surprisingly prompt, positive response within two hours. By mid‑December, Sujal learned that his paper, titled “Tracing the Moving Target: Locating Value Propositions on AI Ethics in the European Union,” had been accepted for presentation. Then came the clincher: the venue would be UNESCO House in Paris.

The conference: safe, ethical AI on a global stage

The International Association for Safe & Ethical AI (IASEAI), pronounced “I‑see‑eye,” is an independent nonprofit dedicated to keeping the fast‑evolving AI ecosystem aligned with human well‑being. The Paris 2026 conference brought together professors, researchers, industry leaders, and policy experts from across the globe to debate risks, red flags, and governance mechanisms for AI that can benefit humanity rather than harm it.

Sujal remembers the venue vividly: the imposing UNESCO building near the Eiffel Tower, the bustle of delegates, and the small but meaningful detail of national flags from almost every country fluttering inside the hall. “Seeing the flags of so many nations together really struck me,” he says. “It felt like a space where books‑to‑bedroom‑level research could meet real‑world policy.”

Panel sessions and workshops featured a mix of disciplines – computer scientists described model architectures, data scientists discussed bias and fairness, and philosophers debated the moral implications of autonomous systems. Industry teams from Google, Anthropic, Infosys, and other organizations shared insights from real‑world deployments.

Sujal’s niche: language, policy, and AI

Sujal is pursuing a 5‑year Dual Degree at IIIT‑H – a B.Tech in Computer Science and a Master of Science in Computing and Human Sciences by Research. The program immerses students in the human‑centred side of technology and gives them tools to think critically about ethics, governance, and user experience alongside hardcore coding and systems design.

Under the guidance of Dr. Aakansha Natani, Sujal’s research began to crystallize around a simple but powerful question: How do AI‑related concepts evolve in policy and rhetoric? Dr. Natani introduced him to a dataset of European Union AI and digital‑strategy documents and encouraged him to trace how emerging terms shaped governance.

“I noticed that everyone around me – friends, labmates, and even senior researchers – kept using phrases like ‘explainable AI,’ ‘safe AI,’ ‘green AI,’ ‘human‑centric AI,’ ‘carbon‑efficient AI,’ and ‘trustworthy AI,’” Sujal observes. “These terms were everywhere, but they weren’t being defined in the same way. That made me curious about how they travel from casual conversation into policy.”

From this observation grew the framework of his paper.

‘Tracing the Moving Target’ – mapping AI ethics in the EU

Sujal’s work focuses on the European Union, one of the first major political blocs to develop formal frameworks for AI safety and ethics. He analyzed EU documents from 2018 to 2025, treating the language around AI as its own kind of evolving ecosystem.

For each keyword – “explainable AI,” “safe AI,” “human‑centric AI,” and others – he tracked:

- Which institution first used the term,

- In what year it appeared,

- How often it was repeated, and

- Whether it led to formal laws, draft acts, or merely soft‑commitment guidelines.
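The four attributes tracked for each keyword can be pictured as a simple tabulation. The sketch below is only an illustration of that idea, not the method or data used in the paper: the `Mention` record, the `summarize` helper, the `OUTCOME_RANK` ordering, and the sample rows (with placeholder institution names and years) are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical ordering of outcomes, weakest to strongest (an assumption).
OUTCOME_RANK = {"guideline": 0, "draft act": 1, "law": 2}


@dataclass(frozen=True)
class Mention:
    """One appearance of a keyword in a policy document (illustrative schema)."""
    keyword: str
    institution: str
    year: int
    outcome: str  # must be a key of OUTCOME_RANK


def summarize(mentions):
    """Per keyword: first institution, first year, mention count, strongest outcome."""
    grouped = defaultdict(list)
    for m in mentions:
        grouped[m.keyword].append(m)

    summary = {}
    for keyword, items in grouped.items():
        first = min(items, key=lambda m: m.year)  # earliest appearance
        strongest = max(items, key=lambda m: OUTCOME_RANK[m.outcome])
        summary[keyword] = {
            "first_institution": first.institution,
            "first_year": first.year,
            "mentions": len(items),
            "strongest_outcome": strongest.outcome,
        }
    return summary


# Placeholder records, invented for illustration; not from the paper's dataset.
SAMPLE = [
    Mention("trustworthy AI", "Institution A", 2019, "guideline"),
    Mention("trustworthy AI", "Institution B", 2021, "draft act"),
    Mention("trustworthy AI", "Institution B", 2024, "law"),
    Mention("green AI", "Institution C", 2020, "guideline"),
]

if __name__ == "__main__":
    for keyword, row in summarize(SAMPLE).items():
        print(keyword, row)
```

Grouping by keyword first keeps each of the four questions (who, when, how often, with what outcome) a one-line lookup over the same set of records.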

His thesis, broadly, is that AI ethics is a “moving target”: the values and priorities embedded in AI‑related language shift over time, influenced by scandals, technological breakthroughs, and political debates.

“From a policy perspective, I began asking: which body in the EU first introduced this concept, and in what context?” he explains. “Was it a think tank, a research group, or a regulatory agency? Did the term stay at the rhetorical level, or did it slowly become embedded in legislation?”

His analysis suggests that, while the EU has been proactive in articulating AI‑related norms, the transition from language to concrete, actionable governance tools is still incomplete. “Bureaucrats often treat labels like ‘safe AI’ as buzzwords,” Sujal notes. “Researchers, on the other hand, struggle to translate those values into measurable metrics. There is a clear gap between the language of policy and the tools of practice.”

A multidisciplinary panel in Paris

The highlight of Sujal’s participation was his panel, titled “Human Values and Social Norms in the Age of Artificial Intelligence.” The session brought together computer scientists, sociologists, philosophers, and industry practitioners to explore how AI is reshaping social norms, democratic processes, and everyday life.

Computer scientists spoke about model architectures and risk‑mitigation strategies, while sociologists described how AI interacts with regimes – both democratic and authoritarian – and how it affects public trust and polarisation. Industry leaders shared case studies from real‑world deployments, highlighting the difficulty of balancing performance, efficiency, and ethical constraints.

Sujal’s contribution anchored the conversation in the language of policy and the role of keywords in shaping debates. He described how terms like “trustworthy AI” or “ethical AI” travel from research labs into draft legislation, often changing meaning along the way.

“Before my presentation, I was nervous,” he admits. “The panel had experts from very well‑known institutions, and I was a student from India.” The panel chair, Ms. Songya, and a fellow speaker, Professor Nathan Miller, noticed his tension and offered reassurance. “They told me I had prepared well, and that my perspective was valuable because it was grounded in real research rather than abstraction,” Sujal recalls.

During the discussion, he engaged in a spirited exchange with a senior data‑company executive. “The conversation was about aligning AI practice with shared values,” he says. “We agreed that the challenge is not just to build safe systems, but to build them in a way that is explainable, accountable, and inclusive.”

A Paris glassful of culture and croissants

Beyond the conference hall, Paris gave Sujal a fresh lens on life itself. “The first thing I bought in Paris was a croissant,” he laughs. He extended what began as a five‑day professional trip, partly to explore the city.

His hotel, near the Arc de Triomphe, was upgraded during his extended stay, an unexpected perk that made the experience feel even more special. Journaling, scrapbooking, and strolling through the streets became his way of soaking in the city’s rhythm. “Binge‑watching Emily in Paris didn’t give me the full picture,” he reflects. “Walking through the Luxembourg Gardens, standing in front of the Eiffel Tower, and using the Citymapper app to navigate the metro felt like coming into a different world.”

The conference food – available under canopies with maps and cloth napkins – added a distinctive Parisian flavour to the experience. “Granny Smith apple sandwiches with walnuts, honey, and goat cheese were among the highlights,” he recalls. “The evening sundowners were elegant but informal, which encouraged more conversation.”

Lessons from the global AI community

The most enduring lesson from the conference, Sujal says, is the importance of not waiting for a crisis before regulating AI. One observation that stayed with him came from Professor Stuart Russell, one of the event’s organisers and a leading AI safety thinker.

“Professor Russell pointed out that the world only decided to regulate nuclear technology seriously after the bombs fell on Hiroshima and Nagasaki,” Sujal explains. “With AI, we already know the potential harms. We should not wait for a similar catastrophe before we act.”

That sentiment resonated throughout the conference hall. “Many people in the audience were already thinking about the need for independent regulatory bodies, transparency frameworks, and strong red lines on AI deployment,” he notes. “The conversation is no longer only about what AI can do, but also about what it should not be allowed to do.”

From IIIT‑H campus to the global stage

Back at IIIT‑Hyderabad, Sujal traces his Paris‑bound journey not just to his research, but also to the campus community that shaped his skills. “I loved meeting people, and that helped me network at the conference,” he says.

In his early years, he was cautious about balancing academics and extracurriculars, but once he saw his grades holding up, he dove into campus life. He served as the coordinator of the Art Society, a member of the dance crew, marketing head of the college magazine, design head of the college fest, and captain of Vayu House.

“Dancing, casual conversations, and creative work are my stress‑busting tools,” he explains. “Origami, sketching, and painting are calming. They help me think more clearly when I am back at my laptop, coding or writing.”

He credits Dr. Natani and his labmates, Nanda Rajiv and Siddhi Wadekar, with being the backbone of his success. Weekly lab meetings, candid feedback, and late‑night conversations about ideas pushed him to refine his work. “Without them, this would not have been possible,” he says. “The discussions we had in the lab meant the world to me.”

What lies ahead

Sujal does not yet know exactly where his research will take him, but he knows he wants to stay at the intersection of AI, language, and policy. The IASEAI conference opened his eyes to the many unanswered questions in AI ethics:

- How to track the evolution of norms in AI‑related discourse,

- How to measure the “ethical performance” of AI systems, and

- How to build governance frameworks that are both technically rigorous and socially just.

He intends to continue exploring these themes while at IIIT‑H, and to contribute to global conversations that shape the future of AI.

“Interacting with all those stakeholders in one place made me see the big picture,” Sujal concludes. “If AI is to shape how we live, work, and think, we must be very careful about how we frame it in words – and in policy. Because when it comes to AI, words truly carry weight.”
